
A while back, I tasked one of my team members with updating the NTP servers used in one of our datacenters. We were using standard pool NTP services and decided to move away from them for various reasons. We found that stable time was more important than accurate time, and the pools definitely didn't add stability. NTP uses UDP by default, and we wanted to turn off (or ACL off) UDP in certain networks. So we grabbed a few CDMA-based time servers off of eBay, fronted them with our typical Juniper SRX firewalls, and set up clients to use the SRXs as time sources.

After setting up a few devices, this employee suggested, "Hey, why don't we set this up on a loopback and anycast it?" I thought about it for a second, something else came up, we moved on, and the suggestion was forgotten (by both of us). We had not finished moving everything from flat, layer 2 networks to a true Clos L3LS setup, so the timing wasn't quite right. After finishing the L3LS migration, I looked at this again, and we're very happy with the results.

The idea of BGP anycasting isn't novel. CDNs like BitGravity proved it worked with TCP as well as UDP. It's a well-accepted method of distributing traffic across geographic regions on the Internet. But what about inside your own datacenter? It works just as well, if not better! The idea is very simple:

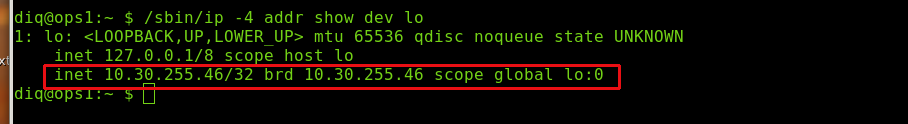

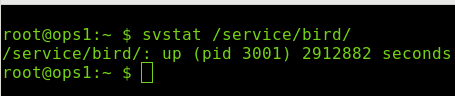

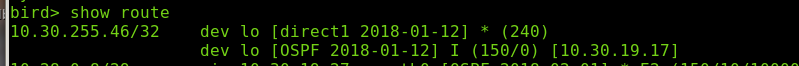

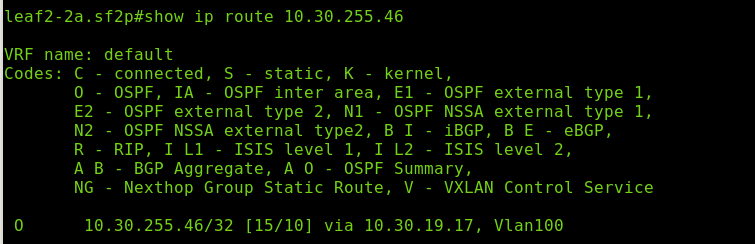

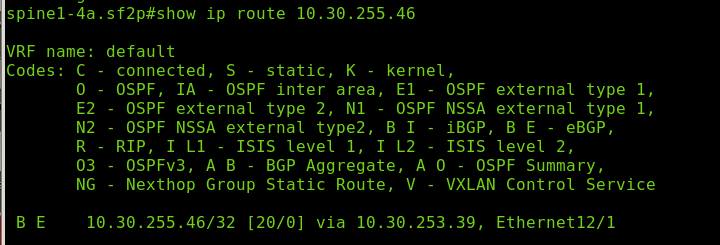

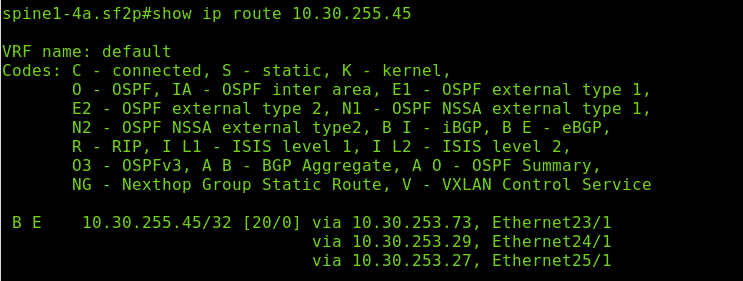

As you can see, it's not terribly complex in operation. It works extremely well, even across datacenters. Use the same loopback IP address across all of your datacenters for a service (here, NTP), and the routers will automatically send your clients to the closest instance. If the service in your DC goes down for whatever reason, you'll be routed to another DC and everything keeps humming along. We do this for stuff like NTP and DNS, as well as services like Postgres and Ceph. Here's a shot of our Ceph RGWs with 3 ECMP routes:

For simplicity, we chose to use Bird and OSPF as the route advertisement mechanism. Zero knowledge and setup are required there: just fire up OSPF on 0.0.0.0, since you only run OSPF inside the rack. The ToR switch exports OSPF routes into the BGP L3 Leaf-Spine mesh, but no OSPF is used at the Leaf-Spine level. This avoids the administrative overhead that running BGP inside the rack would require.
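To make the routing behavior concrete, here's a toy Python model of what a ToR switch does with an anycast prefix. It's only a sketch, not our actual setup: the `Router` class, the `10.255.0.123/32` address, the host names, and the cost values are all made up for illustration. Real routers do this with OSPF/BGP metrics, but the selection logic is the same in spirit: keep every advertisement, forward to the cheapest, and load-balance across ties (ECMP).

```python
from dataclasses import dataclass

# Hypothetical service loopback; not an address from the post.
ANYCAST_IP = "10.255.0.123/32"


@dataclass(frozen=True)
class Route:
    prefix: str    # the anycast prefix being advertised
    next_hop: str  # who advertised it (a local server or a remote DC)
    cost: int      # routing metric as seen from this router


class Router:
    """Toy routing table: stores all advertisements, forwards on the cheapest."""

    def __init__(self):
        self.routes = set()

    def advertise(self, prefix, next_hop, cost):
        self.routes.add(Route(prefix, next_hop, cost))

    def withdraw(self, prefix, next_hop):
        # Drop every route for this prefix learned from this next hop.
        self.routes = {
            r for r in self.routes
            if not (r.prefix == prefix and r.next_hop == next_hop)
        }

    def best_next_hops(self, prefix):
        """Return all next hops tied for the lowest cost (the ECMP set)."""
        candidates = [r for r in self.routes if r.prefix == prefix]
        if not candidates:
            return []
        lowest = min(r.cost for r in candidates)
        return sorted(r.next_hop for r in candidates if r.cost == lowest)


tor = Router()

# Three local servers announce the same loopback at equal cost ...
for server in ("rgw1", "rgw2", "rgw3"):
    tor.advertise(ANYCAST_IP, server, cost=10)
# ... and a remote datacenter announces it too, but it is farther away.
tor.advertise(ANYCAST_IP, "remote-dc", cost=100)

local = tor.best_next_hops(ANYCAST_IP)

# Every local instance goes away, so its advertisement is withdrawn.
for server in ("rgw1", "rgw2", "rgw3"):
    tor.withdraw(ANYCAST_IP, server)

after_failure = tor.best_next_hops(ANYCAST_IP)

print("healthy:", local)
print("local outage:", after_failure)
```

While the local servers are advertising, traffic is spread across the three equal-cost routes. Once they all withdraw, the only remaining route points at the other datacenter, and clients never have to change the address they talk to.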

I realize that this post isn't novel or groundbreaking to many, but I wanted to share what we found with everyone.

papajwl

2/15/2018 12:30:34 pm

The simplicity is brilliant. Nice write up.

Author: A NOLA native just trying to get by. I live in San Francisco and work as a digital plumber for the joint that runs this thing (Square/Weebly). Thoughts are mine, not my company's.